Backup and Restore on NetBSD

Overview

Putting together the bits and pieces of a backup and restore concept, while not rocket science, always seems to be a rather thankless task. Most admin handbooks cover this topic in only a few pages. After replacing my old Mac Mini's OS with NetBSD, I tried to implement an automated backup that I can handle similarly to the Time Machine backups I had been using before. Suggestions on how to improve it are always welcome.

Some thoughts about Strategy

The first thing you probably see when reading about these topics is the advice: don't have a backup strategy, have a recovery strategy. That is, make sure your backups are actually in a usable shape and that you know how to apply them in an emergency. Depending on how much you value your data, you might want to store the backup media in a physically remote place. At the very least, you should not store it on the same disks that are being backed up, but on detachable media or on a remote computer. It should also be set read-only after the backup is finished, so it cannot accidentally be damaged when accessing it.

The next question is how much time and space you want to dedicate to your backups. When doing a full backup each time, recovery is easy: just apply the latest backup. On the flip side, each backup might take a long time and much storage space. The other extreme is to start with one full backup and afterwards always back up only the increments relative to the previous backup. Then, of course, the restore is expensive, as you need to apply every single backup from first to last in the right order to do a full restore. Tools like rsync(1) mitigate this by merging each increment into the previous backup, maintaining a copy of the backed-up file system. But this collides with the requirement of not modifying previous backups.

As a compromise, the manpage of the dump(8) tool suggests stepping to the next increment only every nth time (for example every second time), that is, generating the diff against the same preceding backup for two consecutive backups. Besides that, it suggests generating weekly backups incrementing on the original full backup. Finally, it suggests building a new full backup every four weeks, this way maintaining a three-level strategy of stacked increments. Then, in the worst case, like restoring the backup of a cycle's last day, you need to apply the initial full backup, the last weekly increment and the daily increments of the third, fifth and seventh day, so you need to apply at most five backups to do a full restore.

dump(8) lets you define this using backup levels. Level 0 is always a full backup. Each higher level generates the diff against the most recent backup of a lower level. So applying the dump levels 0 3 2 5 4 7 6 in the first week and 1 3 2 5 4 7 6 in the next three weeks follows the backup plan sketched out above. Of course, you can always fine-tune this to your needs.
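The weekly pattern above can also be computed instead of being written out by hand. The following is a minimal sketch in POSIX sh, assuming a 28-day cycle that starts with the full dump on day 1. The function name level_for_day is made up for illustration and is not part of dump(8).

```sh
# level_for_day DAY: print the dump level for day DAY (1..28) of a
# four-week cycle: 0 3 2 5 4 7 6 in week 1, 1 3 2 5 4 7 6 afterwards.
level_for_day() {
    day=$1
    pos=$(( (day - 1) % 7 ))    # position within the week, 0..6
    if [ "$pos" -eq 0 ]; then
        # first day of a week: full dump in week 1, level 1 in later weeks
        if [ "$day" -eq 1 ]; then echo 0; else echo 1; fi
        return
    fi
    # the remaining six days follow the daily pattern 3 2 5 4 7 6
    set -- 3 2 5 4 7 6
    shift $(( pos - 1 ))
    echo "$1"
}
```

Calling level_for_day 8, for example, prints the level of the first weekly increment, which could then be passed to dump as its level argument.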

Another plan would be to back up only personal data like your user directories. The restore plan would then include a fresh OS setup, installing all software needed and then fetching only the user directories from the backup. This doesn't guarantee you get back to the same state as before, as you probably haven't recorded the exact versions of all software that was installed.

While there are many third-party solutions out there, my plan is to use the on-board capabilities for backup. This way, the restore tools are within reach without additional installation steps. For instance, the mini root RAM disk of NetBSD's installation kernel contains at least the restore(8) tool mentioned below.

As I also want to be able to go back after experimental software updates, my plan has been to set up a full backup using dump(8) and restore(8) with the strategy suggested above. After setting this up and seeing that my incremental backups are much smaller than the full one (and even than the later weekly increments), I decided to modify the plan sketched out above and also do the first monthly backup as level 1, this way doing full backups only on demand (e.g. after a system upgrade). On the other hand, when there are large diffs every day, it may be more practical to just do a weekly full backup and daily incremental backups diffing against the previous day. For example, this seems to make sense at times when larger parts of pkgsrc are being compiled.

Accessing a remote backup device

When you don't have a backup tape device, you should probably have an external backup medium ready instead. In the easiest case, that device is attached directly to your computer, so you can just address its device entry.

When it is attached to another computer, there are several options. The first one is to use the remote option of dump(8), which indirectly accesses the remote computer using ssh(1) and rmt(8), so both must be installed and accessible there. Then you can set the environment variable RCMD_CMD to ssh and address your device with the option -f user@host:file.

If, for example, rmt(8) is not available, the next option suggested by many tutorials is to pipe to dd(1) using ssh(1):

dump <options> | ssh -l <user> <host> dd of=/dev/<dump-device>

The pipe for the way back to restore then would be like this:

ssh -l <user> <host> dd if=/dev/<dump-device> | restore -f -

where /dev/<dump-device> might also be a path to a plain file.

Unfortunately, doing an interactive restore via this sort of piped ssh does not seem to be a good idea, especially if the backup file is large. Nevertheless, this might be an option for non-interactive restores.

But if you can ssh(1) into a remote box, the easiest way to get it within reach would be to just mount it using mount_psshfs(8).

In my case, the backup device is an Apple Time Capsule, which is itself a NetBSD-operated device. My first plan, using the remote backup facility of dump(8), didn't work out because the rmt(8) command is missing on the Time Capsule. Perhaps some day I'll try to find a statically linked rmt(8) binary for NetBSD-6.0/evbarm (or cross-build it myself). For now, I'm resorting to mount_afp, provided by pkgsrc, and mounting the Time Capsule file system to access it in a less sophisticated way.

BTW, when doing so, I had to manually create a link named /dev/fuse0 pointing to /dev/putter (ln -s /dev/putter /dev/fuse0) to make afpfsd work.

So far, the automatic mounting of the AFP device doesn't seem to work reliably, which somewhat undermines my approach. I had at least one case where afpfsd crashed while dumping.
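One way to cope with a mount that fails sporadically is to retry it a few times before giving up. The following is a minimal sketch in POSIX sh. The helper name retry and its arguments are made up for illustration, and it assumes that the command reports failure through a non-zero exit status.

```sh
# retry TRIES DELAY CMD...: run CMD until it succeeds, at most TRIES
# times, sleeping DELAY seconds between attempts.
retry() {
    tries=$1
    delay=$2
    shift 2
    n=1
    while ! "$@"; do
        # give up once the allowed number of attempts is used up
        [ "$n" -ge "$tries" ] && return 1
        n=$(( n + 1 ))
        sleep "$delay"
    done
}
```

A call could look like retry 3 10 mount_afp afp://:passwd@host/path /mnt/backup, using the same placeholder URL as in the example session further down.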

The second (and more severe) problem with this approach is not being able to restore from scratch in case of a complete failure. As mentioned, I'd like to be able to restore from the NetBSD installation mini root file system, which doesn't contain mount_afp. The network tools available there include rcmd (allowing simple, unsecured remote access via restore, rexec and rmt), ftp and mount_nfs. For all of them, the server-side components are missing on the Time Capsule. So, in case of a complete restore, my choice will probably be to mount_afp the backup device onto another system, re-export it from there via NFS and this way finally make it reachable from the NetBSD installation mini root.

Snapshots

One downside of using dump(8) is that it cannot reliably take backups of live file systems. That used to imply the need to go down to single-user mode and unmount the file systems for each backup. Fortunately, NetBSD has nice support for file system snapshots courtesy of fssconfig(8), which eases the backup process a great deal.

As root, for example, use

fssconfig -cv fss0 / /root/snapshot

to snapshot the file system and make the snapshot reachable through the /dev/fss0 device. The file /root/snapshot is used internally to manage the snapshot while the file system stays live. You can then mount the device and see the unchanged directory tree, even if you change the live file system.

fssconfig -l shows the snapshot devices currently in use. With fssconfig -u you can remove a snapshot after dumping it. Afterwards, the snapshot file can also be removed.

dump(8) logs the time, device and level of each dump in /etc/dumpdates. Normally, the file system devices are recorded here. But when using fss snapshots, the fss device name is written into dumpdates instead, so you should consistently use the same fss device number for each file system and different numbers for different file systems. For example, use fss0 for root, fss1 for /usr when they are separate file systems, etc.
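To keep this assignment consistent across runs, it can be written down in one place. The following is a minimal sketch in POSIX sh. The function name fss_for is made up, and the /var entry is only an assumed example of a third file system.

```sh
# fss_for MOUNTPOINT: print the fss device reserved for that file system,
# so that /etc/dumpdates always sees the same device name per file system.
fss_for() {
    case $1 in
        /)    echo fss0 ;;
        /usr) echo fss1 ;;
        /var) echo fss2 ;;    # assumed example, adapt to your layout
        *)
            echo "no fss device assigned to $1" >&2
            return 1
            ;;
    esac
}
```

A backup script could then call fss_for / to obtain the device name instead of hard-coding it in several places.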

As I don't want the directory entry for the snapshot to be included in the dump, I put it into /tmp, which resides on a tmpfs on my system, so it is guaranteed not to be part of the file system being dumped. When doing this, an image is generated that serves as backing store while the snapshot persists. As this may grow too large for the /tmp file system, you can specify a block (cluster) size and a backing store size in the fssconfig(8) call. This way, I give it a smaller size and then mount the fss device read-only so that the backing store doesn't overflow.
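The snapshot handling with a limited backing store can be wrapped into two small functions. The following is a minimal sketch in POSIX sh, using the cluster size 512 and backing store size 10485760 from the script in the appendix. The names run, snapshot_new and snapshot_rm as well as the DRYRUN variable are made up for illustration. With DRYRUN set, the commands are only printed, which allows checking the sequence on any system.

```sh
# run CMD...: print the command when DRYRUN is set, execute it otherwise
run() {
    if [ -n "${DRYRUN:-}" ]; then
        echo "$@"
    else
        "$@"
    fi
}

# snapshot_new FSS SRCDEV BACKING MNT: create the snapshot with a small
# backing store and mount it read-only
snapshot_new() {
    run fssconfig -c "$1" "$2" "$3" 512 10485760 &&
    run mount -r "/dev/$1" "$4"
}

# snapshot_rm FSS BACKING MNT: unmount and remove the snapshot again
snapshot_rm() {
    run umount "$3"
    run fssconfig -u "$1"
    run rm -f "$2"
}
```

For example, DRYRUN=1 snapshot_new fss0 / /tmp/snapshot /mnt/dev prints the fssconfig and mount commands without touching the system.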

Restoring

All this work is done to be able to walk the opposite way and restore a damaged system in an emergency. So let's now have a look at restore(8). It can do full or partial restores and also has an interactive mode.

restore -t -f dump_file

This doesn't modify anything, but just lists the contents of the backup. Besides the file and directory names, this includes the dump date, the level and, in case of an incremental backup, the previous level.

When doing a full restore into a fresh file system, prepare it using newfs(8) first. Afterwards, mount(8) it and cd into the new file system, as the restored files go into the current directory.

restore -rf dump_file

This rebuilds the file system. When a set of incremental dumps is to be applied, restore(8) needs to pass information between the different runs. So it creates a restoresymtable file in the root directory, storing information about its progress. Consequently, this file should be left in place until the complete restore is finished.

restore -if dump_file

This allows you to interactively look into a dump and select single files or directories to be restored. ? shows the commands available here. Often, when you just want to get back an older version of a file, this is the most useful tool. However, when implementing incremental backups as shown above, you only have the backed-up versions of the last seven days and of the initial dump. So if you need more, take that into account when defining your strategy.

restore -xf dump_file

This extracts single files or directories instead of doing a full restore, so it also creates no restoresymtable.

And finally,

restore -ruf dump_file

does a full restore, but can be used on a populated file system. It unlinks existing files and replaces them with the versions from the backup. So it can be used to try to repair a file system.

By the way, when applying an incremental backup after a full restore, the files to be replaced by the increment are automatically unlinked first, so this also works as expected without any need to specify the -u argument.

When restoring a backup made with the strategy sketched out above, start with the (latest) level 0 dump, then work through all newer dumps, leaving out each one for which a newer dump with a lower level exists. The dates and other information about each dump file can be extracted from the output of restore -t, or interactively by using the what command in restore -i. For example, when dumps were generated in the order 0 3 2 4, you'll find that for the level 3 dump a newer one with a lower level exists (level 2), so 3 is left out. The only dump with a lower level than 2 is the older level 0, so you choose 2. For 4, the dumps with lower levels are also all older, so 4 is chosen too, giving the restore order 0 2 4.
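The selection rule can be expressed as a small function. The following is a minimal sketch in POSIX sh. The function name restore_order is made up, and it takes the dump levels in chronological order as arguments. As an assumption beyond the rule stated above, a newer dump of the same level is also treated as replacing an older one, which does not occur when each level is kept in a single file.

```sh
# restore_order LEVEL...: given the levels of all dumps in chronological
# order, print the levels of the dumps to apply, oldest first.
restore_order() {
    # reverse the list so it can be walked from newest to oldest
    rev=
    for lev in "$@"; do
        rev="$lev $rev"
    done
    min=10      # dump levels are 0..9, so 10 is above all of them
    order=
    for lev in $rev; do
        # keep a dump only if no newer dump has a lower or equal level
        if [ "$lev" -lt "$min" ]; then
            order="$lev $order"
            min=$lev
        fi
        # the latest level 0 dump is the starting point, stop there
        [ "$lev" -eq 0 ] && break
    done
    echo $order
}
```

Running restore_order 0 3 2 4 prints 0 2 4, matching the example above. For the last day of the second week, restore_order 0 3 2 5 4 7 6 1 3 2 5 4 7 6 prints 0 1 2 4 6, the five dumps of the worst case mentioned in the strategy section.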

Some more notes

You can exclude files or directories from the backup by setting the nodump flag. ls -o shows the current flags. Set nonodump to remove the flag.

chflags nodump file-or-dir
ls -o
chflags nonodump file-or-dir

By default, the nodump flag is honored only for incremental backups starting with level 1, but you can change this with the -h option of dump(8). I'm setting this to 0 to always have the flag honored.

dump 0a -h 0 -f /tmp/backup.1 /home

For example, I'm using this to exclude /usr/pkgsrc from the backup. Alternatively, you can specify a list of paths when only a subset of a file system should be backed up. When doing this, dump(8) always does a full level 0 backup of the given directories.

When a long dump is running, you can send a SIGINFO to the dump process to make it report its progress. For example, when the status control character is mapped to CTRL-T via stty(1), a dump process running in the foreground reports its progress when you press that key (restore(8) does so as well).

If you are doing backups manually, besides looking at /etc/dumpdates you can use dump -w to show the file systems that currently need to be dumped. Alternatively, you can always use dump -W to show the last dump times and levels of all dumped file systems. dump(8) is also integrated into the housekeeping concepts of NetBSD insofar as this output is included in the report of the daily(5) maintenance tool.

The dump frequency in days, used to determine which file systems need to be dumped next, can be defined in the fifth field of each /etc/fstab entry. But when using snapshots, the devices actually dumped are not listed in fstab, so this mechanism doesn't work. Automating the backup in a crontab and scheduling the dump entries with matching frequencies can make up for this.

An example session

Here is an example of mounting/unmounting an afp backup device, handling a file system snapshot and doing a full dump.

mount_afp afp://:passwd@host/path /mnt/backup
fssconfig -cv fss0 / /snapshot
mount /dev/fss0 /mnt/dev
dump -0ua -h 0 -f /mnt/backup/dumpfile.0 /mnt/dev
umount /mnt/dev
fssconfig -u fss0
rm /snapshot
afp_client unmount /mnt/backup

To do a restore, you would use the same sequence, perhaps replacing the dump command with an interactive restore:

restore -if /mnt/backup/var

Putting the pieces together

Most of this is put together in a bash script, backup.sh (see the appendix at the end). When sourced, it provides some commands to support handling snapshots, mounting the backup device, making an incremental backup following a configured strategy and accessing or restoring from the backup device. For example, after adapting the conf file to your needs, a manual initial level 0 dump can be done like this:

. /root/bin/backup.sh && backup - 0

An interactive restore session of the last level 5 dump is done by this:

. /root/bin/backup.sh && restoredump - -i 5

The script includes an example on how to automate daily backups by calling it via crontab, saving the output to a log and mailing it to root.

At the end…

After a few days of automatic backups, this setup seems to work quite reliably. The files are rotated and replaced in the expected order, and after looking at the contents with an interactive restore and doing a test recovery, everything looks good. Having set up this kind of backup gives some confidence. Now let's make sure, continuously, that this confidence is actually justified.

Other, more sophisticated means of data security include the use of ZFS or RAID, which one day may be the topic of further explorations.

As a side note, while experimenting with dump(8) and restore(8), I stumbled upon the last dump made on my NeXTStep system some decades ago. And, believe it or not, the restore(8) command on 2020's NetBSD is still able to read that old dump format. So when I find some more time, I hope to restore it into a virtualized NeXTStep reincarnation. That would be a recovery strategy that has worked out really well!

Feel free to leave a comment on Reddit

Appendix: the backup script

Use caution, as this is not yet well enough tested; just use it as a simple example. For example, make sure that two dumps of different file systems don't run at the same time. Otherwise, the first one to finish will unmount the backup device, making the second one fail.

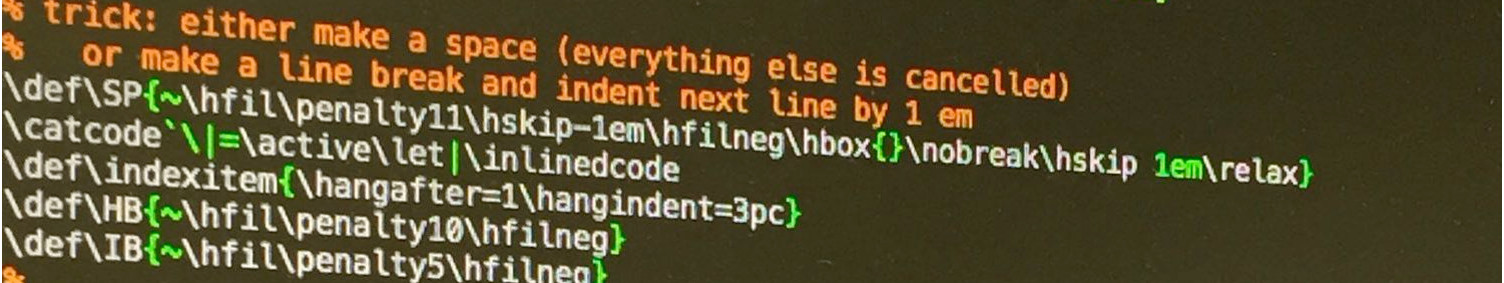

#!/usr/pkg/bin/bash

# copy and adapt the config vars into ~/etc/backup.conf
# put auth info like DUMPDEVPWD into ~/.backup.conf
# and set it chmod 400 and chflags nodump

# install as root crontab like this:
#
# daily backups
# 0 1 * * * /usr/pkg/bin/bash -c '. /root/bin/backup.sh && backup /root/etc/backup-var.conf' 2>&1 | tee /var/log/backup-var.out | sendmail -t
# 30 1 * * * /usr/pkg/bin/bash -c '. /root/bin/backup.sh && backup' 2>&1 | tee /var/log/backup.out | sendmail -t

# uncomment to test backup config
#TEST=echo

# define this when dump device must be mounted
DUMPDEV=afp://:${DUMPDEVPWD}@timecapsule/
DUMPMNT=/mnt/bkup

# unset if you dont want a snapshot
FSS=fss0
SRCDEV=/
SNAPSHOT=/tmp/snapshot

# mountpoint of fs to backup
SRCMNT=/mnt/dev

# backup device or file
BACKUP=${DUMPMNT}/client/dump
#BACKUP=

# backup levels for each day of month
LEVELS=(- 0 3 2 5 4 7 6 1 3 2 5 4 7 6 1 3 2 5 4 7 6 1 3 2 5 4 7 6 1 3 2)

dumpcmd() {
  ${TEST} dump $*
}

restorecmd() {
  ${TEST} restore $*
}

export PATH=$PATH:/usr/pkg/bin

# absolute paths to use in root crontab
test -f /root/.backup.conf && . /root/.backup.conf
test -f /root/etc/backup.conf && . /root/etc/backup.conf

backup_dev() {
  if [ "${DUMPMNT}-" != "-" ]; then
    case "$1" in
      mount)
        ${TEST} mount_afp ${DUMPDEV} ${DUMPMNT}
        ;;
      unmount)
        ${TEST} afp_client unmount ${DUMPMNT}
        ;;
    esac
  fi
}

# when the time capsule needs to spin up, mount seems to fail
# so a second try is done
mount_backup() {
  backup_dev mount
  if [ $? -eq 2 ]; then
    backup_dev mount
  fi
}

snapshot() {
  if [ "${FSS}-" != "-" ]; then
    case $1 in
      new)
        ${TEST} fssconfig -c ${FSS} ${SRCDEV} ${SNAPSHOT} 512 10485760
        ${TEST} mount -r /dev/${FSS} ${SRCMNT}
        ;;
      rm)
        ${TEST} umount ${SRCMNT}
        ${TEST} fssconfig -u ${FSS}
        ${TEST} rm -f ${SNAPSHOT}
        ;;
    esac
  fi
}

find_level() {
  # find level for today
  LEV=${LEVELS[`date '+%e'`]}
  if [ "${1}-" != "-" ]; then
    LEV=${1}
  fi
  if [ "${BACKUP}-" != "-" ]; then
    BACKUPFILE=${BACKUP}.${LEV}
    BOUT=
  else
    BACKUPFILE=
    BOUT=-
  fi
}

# makedump lev
makedump() {
  find_level ${1}
  # save prev lev 0 dump as prevmonth
  if [ ${LEV} -eq 0 ]; then
    test -f ${BACKUPFILE}.prevmonth && rm ${BACKUPFILE}.prevmonth
    test "${BACKUPFILE}-" != "-" && test -f ${BACKUPFILE} && mv ${BACKUPFILE} ${BACKUPFILE}.prevmonth
  fi
  # save prev lev 1 dump as prevweek
  if [ ${LEV} -eq 1 ]; then
    test -f ${BACKUPFILE}.prevweek && rm ${BACKUPFILE}.prevweek
    test "${BACKUPFILE}-" != "-" && test -f ${BACKUPFILE} && mv ${BACKUPFILE} ${BACKUPFILE}.prevweek
  fi
  # all other levs: rm instead of overwriting
  if [ ${LEV} -gt 1 ]; then
    test "${BACKUPFILE}-" != "-" && test -f ${BACKUPFILE} && rm ${BACKUPFILE}
  fi
  # and dump
  dumpcmd ${LEV}ua -h 0 -f ${BACKUPFILE}${BOUT} ${SRCMNT}
}

mailheader() {
  echo "To: root"
  printf "Subject: %s backup dump output for %s\n\n" `hostname` "`date`"
}

# backup [conf [lev]]
backup() {
  mailheader
  # check for custom conf
  test $# -gt 0 && test -f ${1} && . ${1}
  # check for lev
  test $# -gt 1 && LEV=${2} || LEV=
  find_level $LEV
  snapshot new
  # backup_dev mount
  mount_backup
  makedump $LEV
  backup_dev unmount
  snapshot rm
}

# restoredump [conf [args [lev]]]
restoredump() {
  # check for custom conf
  test $# -gt 0 && test -f ${1} && . ${1}
  # check for args
  test $# -gt 1 && ARGS=${2}
  # check for lev
  test $# -gt 2 && LEV=${3} || LEV=
  find_level $LEV
  # backup_dev mount
  mount_backup
  restorecmd ${ARGS} -f ${BACKUPFILE}${BOUT}
  backup_dev unmount
}

And an example of a backup.conf showing how to dump/restore using ssh pipes:

# comment to really do backups
TEST=echo

# define this when dump device must be mounted
DUMPDEV=afp://:${DUMPDEVPWD}@timecapsule/
DUMPMNT=/mnt/bkup

# unset if you dont want a snapshot
FSS=fss0
SRCDEV=/
SNAPSHOT=/tmp/snapshot

# mountpoint of fs to backup
SRCMNT=/mnt/dev

# backup device or file
BACKUP=${DUMPMNT}/client/dump
#BACKUP=

# backup levels for each day of month
LEVELS=(- 0 3 2 5 4 7 6 1 3 2 5 4 7 6 1 3 2 5 4 7 6 1 3 2 5 4 7 6 1 3 2)

# uncomment this to define custom commands
#dumpcmd() {
#  dump $* | ssh timecapsule dd of=/Volumes/dk2/ShareRoot/client/dump.${LEV}
#}
#restorecmd() {
#  ssh timecapsule dd if=/Volumes/dk2/ShareRoot/client/dump.${LEV} | restore $*
#}
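The caveat about two dumps running at the same time could be addressed with a simple lock around each backup run. The following is a minimal sketch in POSIX sh, not part of the script above. The function name with_lock and the lock path /tmp/backup.lock are made up for illustration. It relies on mkdir failing when the directory already exists, so only one caller at a time gets the lock.

```sh
# directory used as lock; the path is a hypothetical default
LOCKDIR=${LOCKDIR:-/tmp/backup.lock}

# with_lock CMD...: run CMD only if no other run currently holds the lock
with_lock() {
    # mkdir either creates the directory or fails, never both for two callers
    if ! mkdir "$LOCKDIR" 2>/dev/null; then
        echo "another backup seems to be running, giving up" >&2
        return 1
    fi
    "$@"
    status=$?
    rmdir "$LOCKDIR"
    return $status
}
```

In a crontab, the second job could then be started as with_lock backup, so it is skipped while the first one is still running. Note that in this sketch a stale lock directory has to be removed by hand if a run gets killed.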